
requests is an HTTP client library for Python, similar to urllib and urllib2. So why use requests instead of urllib2? The official documentation gives its own rationale for this.

1. Installation

2. A first try

    >>> import requests
    >>> r = requests.get('http://www.zhidaow.com')  # send the request
    >>> r.status_code  # status code
    200
    >>> r.headers['content-type']  # response header
    'text/html; charset=utf8'
    >>> r.encoding  # encoding
    'utf-8'
    >>> r.text  # body (note: because of encoding issues, r.content is recommended here)
    u'<!DOCTYPE html>\n<html xmlns="http://www.w3.org/1999/xhtml"...'
    ...

3. Quick guide

3.1 Sending requests

    >>> import requests

    >>> r = requests.get('http://www.zhidaow.com')

    >>> r = requests.post("http://httpbin.org/post")
    >>> r = requests.put("http://httpbin.org/put")
    >>> r = requests.delete("http://httpbin.org/delete")
    >>> r = requests.head("http://httpbin.org/get")
    >>> r = requests.options("http://httpbin.org/get")

3.2 Passing parameters in URLs

    >>> payload = {'wd': '张亚楠', 'rn': '100'}
    >>> r = requests.get("http://www.baidu.com/s", params=payload)
    >>> print(r.url)
    http://www.baidu.com/s?rn=100&wd=%E5%BC%A0%E4%BA%9A%E6%A5%A0
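
To see how `params` ends up in the URL without sending anything over the network, one option is to build the request and only prepare it. The sketch below assumes Python 3 and a reasonably recent requests release; `build_url` is a hypothetical helper name, not part of the library.

```python
import requests


def build_url(base_url, params):
    """Return the final URL requests would use, without sending the request."""
    # Request(...).prepare() applies the same query-string encoding as
    # requests.get(), but stops before any network I/O happens.
    prepared = requests.Request("GET", base_url, params=params).prepare()
    return prepared.url


if __name__ == "__main__":
    payload = {"wd": "张亚楠", "rn": "100"}
    print(build_url("http://www.baidu.com/s", payload))
```

The non-ASCII value is percent-encoded as UTF-8, which matches the `%E5%BC%A0...` sequence in the output above. The order of the query parameters may differ between Python versions.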

3.3 Getting the response content

    >>> r = requests.get('https://www.zhidaow.com')
    >>> r.text
    u'<!DOCTYPE html>\n<html xmlns="http://www.w3.org/1999/xhtml"...'

    >>> r = requests.get('https://www.zhidaow.com')
    >>> r.content
    b'<!DOCTYPE html>\n<html xmlns="http://www.w3.org/1999/xhtml"...'

3.4 Getting the page encoding

    >>> r = requests.get('http://www.zhidaow.com')
    >>> r.encoding
    'utf-8'

    >>> r = requests.get('http://www.zhidaow.com')
    >>> r.encoding
    'utf-8'
    >>> r.encoding = 'ISO-8859-1'
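
Assigning to `r.encoding` changes how `r.text` is decoded, while `r.content` stays as raw bytes. The sketch below illustrates this against a throwaway local server built on the standard library, so it does not depend on any real site. The handler, the `BODY` constant and `fetch_with_encoding` are hypothetical names made up for this example.

```python
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

import requests

BODY = "<p>张亚楠</p>"


class NoCharsetHandler(BaseHTTPRequestHandler):
    """Serves UTF-8 bytes but declares no charset in Content-Type."""

    def do_GET(self):
        data = BODY.encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/html")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def log_message(self, *args):
        pass  # keep the demo quiet


def fetch_with_encoding(encoding):
    """Fetch the page from the local server and decode it as `encoding`."""
    server = HTTPServer(("127.0.0.1", 0), NoCharsetHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        with requests.Session() as session:
            session.trust_env = False  # ignore proxy environment variables
            r = session.get("http://127.0.0.1:%d/" % server.server_port, timeout=5)
        r.encoding = encoding  # r.text is decoded using this value
        return r.text
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(fetch_with_encoding("utf-8") == BODY)       # decoded correctly
    print(fetch_with_encoding("ISO-8859-1") == BODY)  # wrong codec, garbled text
```

When the declared encoding is missing or wrong, setting `r.encoding` by hand (or decoding `r.content` yourself) is usually the simplest fix.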

3.5 JSON

    >>> r = requests.get('http://ip.taobao.com/service/getIpInfo.php?ip=122.88.60.28')
    >>> r.json()['data']['country']
    '中国'

3.6 HTTP status codes

    >>> r = requests.get('http://www.mengtiankong.com')
    >>> r.status_code
    200
    >>> r = requests.get('http://www.mengtiankong.com/123123/')
    >>> r.status_code
    404
    >>> r = requests.get('http://www.baidu.com/link?url=QeTRFOS7TuUQRppa0wlTJJr6FfIYI1DJprJukx4Qy0XnsDO_s9baoO8u1wvjxgqN')
    >>> r.url
    u'http://www.zhidaow.com/'
    >>> r.status_code
    200

    >>> r.history
    (<Response [302]>,)

    >>> r = requests.get('http://www.baidu.com/link?url=QeTRFOS7TuUQRppa0wlTJJr6FfIYI1DJprJukx4Qy0XnsDO_s9baoO8u1wvjxgqN', allow_redirects=False)
    >>> r.status_code
    302
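
The relationship between `status_code`, `history` and `allow_redirects` can be reproduced locally. The sketch below uses a small hypothetical test server whose `/old` path redirects to `/new`; the handler and the `probe` helper are names invented for this illustration.

```python
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

import requests


class RedirectHandler(BaseHTTPRequestHandler):
    """Hypothetical test server: /old redirects to /new, other paths are 404."""

    def do_GET(self):
        if self.path == "/old":
            self.send_response(302)
            self.send_header("Location", "/new")
            self.send_header("Content-Length", "0")
            self.end_headers()
        elif self.path == "/new":
            body = b"ok"
            self.send_response(200)
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, *args):
        pass  # keep the demo quiet


def probe(path, follow):
    """Return (final status code, status codes of the responses in r.history)."""
    server = HTTPServer(("127.0.0.1", 0), RedirectHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        with requests.Session() as session:
            session.trust_env = False  # ignore proxy environment variables
            url = "http://127.0.0.1:%d%s" % (server.server_port, path)
            r = session.get(url, allow_redirects=follow, timeout=5)
        return r.status_code, [h.status_code for h in r.history]
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(probe("/old", follow=True))    # redirect followed, 302 kept in history
    print(probe("/old", follow=False))   # the 302 itself is returned
```

With redirects followed, the intermediate 302 only shows up in `r.history`. With `allow_redirects=False`, it becomes the response you get back.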

3.7 Response headers

    >>> r = requests.get('http://www.zhidaow.com')
    >>> r.headers
    {
        'content-encoding': 'gzip',
        'transfer-encoding': 'chunked',
        'content-type': 'text/html; charset=utf-8',
        ...
    }

    >>> r.headers['Content-Type']
    'text/html; charset=utf-8'
    >>> r.headers.get('content-type')
    'text/html; charset=utf-8'

3.8 Setting a timeout

    >>> requests.get('http://github.com', timeout=0.001)
    Traceback (most recent call last):
      File "<stdin>", line 1, in <module>
    requests.exceptions.Timeout: HTTPConnectionPool(host='github.com', port=80): Request timed out. (timeout=0.001)
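
In a script you would normally catch the timeout rather than let the traceback escape. Here is a minimal sketch, assuming a deliberately slow local server as a stand-in for an unresponsive site. `SlowHandler`, `get_status_or_none` and `demo` are hypothetical names for this example.

```python
import threading
import time
from http.server import BaseHTTPRequestHandler, HTTPServer

import requests

SERVER_DELAY = 0.5  # seconds the hypothetical slow server waits before replying


class SlowHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        time.sleep(SERVER_DELAY)
        try:
            self.send_response(200)
            self.send_header("Content-Length", "0")
            self.end_headers()
        except OSError:
            pass  # the client gave up and closed the connection

    def log_message(self, *args):
        pass  # keep the demo quiet


def get_status_or_none(url, timeout):
    """Return the status code, or None if the request timed out."""
    try:
        with requests.Session() as session:
            session.trust_env = False  # ignore proxy environment variables
            return session.get(url, timeout=timeout).status_code
    except requests.exceptions.Timeout:
        return None


def demo(timeout):
    """Run one request against the slow local server with the given timeout."""
    server = HTTPServer(("127.0.0.1", 0), SlowHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        url = "http://127.0.0.1:%d/" % server.server_port
        return get_status_or_none(url, timeout)
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(demo(0.1))  # shorter than the server delay, so the request times out
    print(demo(5))    # long enough for the server to answer
```

`requests.exceptions.Timeout` covers both connection and read timeouts, so a single `except` clause handles either case.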

3.9 Using proxies

    import requests

    proxies = {
        "http": "http://10.10.1.10:3128",
        "https": "http://10.10.1.10:1080",
    }
    requests.get("http://www.zhidaow.com", proxies=proxies)

    proxies = {
        "http": "http://user:pass@10.10.1.10:3128/",
    }

3.10 Request headers

    >>> r.request.headers
    {'Accept-Encoding': 'identity, deflate, compress, gzip', 'Accept': '*/*', 'User-Agent': 'python-requests/1.2.3 CPython/2.7.3 Windows/XP'}

3.11 Custom request headers

    import requests

    r = requests.get('http://www.zhidaow.com')
    print(r.request.headers['User-Agent'])
    # python-requests/1.2.3 CPython/2.7.3 Windows/XP

    headers = {'User-Agent': 'alexkh'}
    r = requests.get('http://www.zhidaow.com', headers=headers)
    print(r.request.headers['User-Agent'])
    # alexkh
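
The same check can be made without touching the network by preparing the request through a `Session`, which merges its default headers with the ones you pass in. This is a sketch only; `effective_headers` is a hypothetical helper name.

```python
import requests


def effective_headers(url, headers=None):
    """Return the headers a Session would send for a GET, without sending it."""
    with requests.Session() as session:
        request = requests.Request("GET", url, headers=headers)
        # prepare_request merges the session's default headers with ours;
        # headers passed explicitly win over the defaults.
        prepared = session.prepare_request(request)
        return dict(prepared.headers)


if __name__ == "__main__":
    print(effective_headers("http://www.zhidaow.com")["User-Agent"])
    custom = effective_headers("http://www.zhidaow.com", {"User-Agent": "alexkh"})
    print(custom["User-Agent"])
```

Only the `User-Agent` is overridden. Defaults such as `Accept-Encoding` are still sent unless you replace them as well.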

3.12 Persistent connections (keep-alive)
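
In requests, connections are reused when calls go through a `Session` object. The sketch below is one way to observe that. It assumes a local HTTP/1.1 test server that records the client-side port of each incoming request: if the connection is kept alive, every request arrives from the same port. `KeepAliveHandler`, `seen_ports` and `ports_used_by_session` are hypothetical names for this illustration.

```python
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

import requests

seen_ports = []  # client-side port of every request the server receives


class KeepAliveHandler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # lets the server keep connections open

    def do_GET(self):
        seen_ports.append(self.client_address[1])
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the demo quiet


def ports_used_by_session(n_requests):
    """Send n_requests GETs through one Session; return the client ports seen."""
    del seen_ports[:]
    server = ThreadingHTTPServer(("127.0.0.1", 0), KeepAliveHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        with requests.Session() as session:
            session.trust_env = False  # ignore proxy environment variables
            for _ in range(n_requests):
                session.get("http://127.0.0.1:%d/" % server.server_port, timeout=5)
        return list(seen_ports)
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    ports = ports_used_by_session(3)
    print(len(ports), "requests over", len(set(ports)), "connection(s)")
```

Calling `requests.get` repeatedly creates a fresh session each time, so a `Session` is the natural choice when many requests go to the same host.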

4. Simple applications

4.1 Getting a page's status code

    import requests

    def get_status(url):
        # do not follow redirects, so 301/302 are reported as-is
        r = requests.get(url, allow_redirects=False)
        return r.status_code

    print(get_status('http://www.zhidaow.com'))  # 200
    print(get_status('http://www.zhidaow.com/hi404/'))  # 404
    print(get_status('http://mengtiankong.com'))  # 301
    print(get_status('http://www.baidu.com/link?url=QeTRFOS7TuUQRppa0wlTJJr6FfIYI1DJprJukx4Qy0XnsDO_s9baoO8u1wvjxgqN'))  # 302
    print(get_status('http://www.huiya56.com/com8.intre.asp?46981.html'))  # 500

Afterword

Originally published as: python requests 的安装与简单运用 (Installing Python requests and basic usage)

相关文章

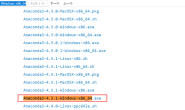

- Anaconda所有历史版本下载(0)

- ThinkPad x13 Gen1傲腾H10重装系统的麻烦(0)

- Win10系统电脑进入安全模式的四种方法,让你轻松应对各种问题(0)

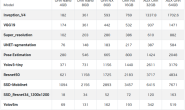

- 使用Jetson_benchmark进行性能测试(0)

- 【Python】修改Windows中 pip 的缓存位置与删除 pip 缓存(1)